7. First Order Logic¶

Propositional logic provides a good start at describing the general principles of logical reasoning, but it does not go far enough. Some of the limitations are apparent even in the “Malice and Alice” example from Chapter 2. Propositional logic does not give us the means to express a general principle that tells us that if Alice is with her son on the beach, then her son is with Alice; the general fact that no child is older than his or her parent; or the general fact that if someone is alone, they are not with someone else. To express principles like these, we need a way to talk about objects and individuals, as well as their properties and the relationships between them. These are exactly what is provided by a more expressive logical framework known as first-order logic, which will be the topic of the next few chapters.

7.1. Functions, Predicates, and Relations¶

Consider some ordinary statements about the natural numbers:

Every natural number is even or odd, but not both.

A natural number is even if and only if it is divisible by two.

If some natural number, \(x\), is even, then so is \(x^2\).

A natural number \(x\) is even if and only if \(x + 1\) is odd.

Any prime number that is greater than 2 is odd.

For any three natural numbers \(x\), \(y\), and \(z\), if \(x\) divides \(y\) and \(y\) divides \(z\), then \(x\) divides \(z\).

These statements are true, but we generally do not think of them as logically valid: they depend on assumptions about the natural numbers, the meaning of the terms “even” and “odd,” and so on. But once we accept the first statement, for example, it seems to be a logical consequence that the number of stairs in the White House is either even or odd, and, in particular, if it is not even, it is odd. To make sense of inferences like these, we need a logical system that can deal with objects, their properties, and relations between them.

Rather than fix a single language once and for all, first-order logic allows us to specify the symbols we wish to use for any given domain of interest. In this section, we will use the following running example:

The domain of interest is the natural numbers, \(\mathbb{N}\).

There are objects, \(0\), \(1\), \(2\), \(3\), ….

There are functions, addition and multiplication, as well as the square function, on this domain.

There are predicates on this domain, “even,” “odd,” and “prime.”

There are relations between elements of this domain, “equal,” “less than”, and “divides.”

For our logical language, we will choose symbols 1, 2, 3, \(\mathit{add}\), \(\mathit{mul}\), \(\mathit{square}\), \(\mathit{even}\), \(\mathit{odd}\), \(\mathit{prime}\), \(\mathit{lt}\), and so on, to denote these things. We will also have variables \(x\), \(y\), and \(z\) ranging over the natural numbers. Note all of the following.

Functions can take different numbers of arguments: if \(x\) and \(y\) are natural numbers, it makes sense to write \(\mathit{mul}(x, y)\) and \(\mathit{square}(x)\). So \(\mathit{mul}\) takes two arguments, and \(\mathit{square}\) takes only one.

Predicates and relations can also be understood in these terms. The predicates \(\mathit{even}(x)\) and \(\mathit{prime}(x)\) take one argument, while the binary relations \(\mathit{divides}(x, y)\) and \(\mathit{lt}(x,y)\) take two arguments.

Functions are different from predicates! A function takes one or more arguments, and returns a value. A predicate takes one or more arguments, and is either true or false. We can think of predicates as returning propositions, rather than values.

In fact, we can think of the constant symbols \(1, 2, 3, \ldots\) as special sorts of function symbols that take zero arguments. Analogously, we can consider the predicates that take zero arguments to be the constant logical values, \(\top\) and \(\bot\).

In ordinary mathematics, we often use “infix” notation for binary functions and relations. For example, we usually write \(x \times y\) or \(x \cdot y\) instead of \(\mathit{mul}(x, y)\), and we write \(x < y\) instead of \(\mathit{lt}(x, y)\). We will use these conventions when writing proofs in natural deduction, and they are supported in Lean as well.

We will treat the equality relation, \(x = y\), as a special binary relation that is included in every first-order language.

First-order logic allows us to build complex expressions out of the basic ones. Starting with the variables and constants, we can use the function symbols to build up compound expressions like these:

\(x + y + z\)

\((x + 1) \times y \times y\)

\(\mathit{square} (x + y \times z)\)

Such expressions are called “terms.” Intuitively, they name objects in the intended domain of discourse.

Now, using the predicates and relation symbols, we can make assertions about these expressions:

\(\mathit{even}(x + y + z)\)

\(\mathit{prime}((x + 1) \times y \times y)\)

\(\mathit{square}(x + y \times z) = w\)

\(x + y < z\)

Even more interestingly, we can use propositional connectives to build compound expressions like these:

\(\mathit{even}(x + y + z) \wedge \mathit{prime}((x + 1) \times y \times y)\)

\(\neg (\mathit{square} (x + y \times z) = w) \vee x + y < z\)

\(x < y \wedge \mathit{even}(x) \wedge \mathit{even}(y) \to x + 1 < y\)

The second one, for example, asserts that either \((x + yz)^2\) is not equal to \(w\), or \(x + y\) is less than \(z\). Remember, these are expressions in symbolic logic; in ordinary mathematics, we would express the notions using words like “is even” and “if and only if,” as we did above. We will use notation like this whenever we are in the realm of symbolic logic, for example, when we write proofs in natural deduction. Expressions like these are called formulas. In contrast to terms, which name things, formulas say things; in other words, they make assertions about objects in the domain of discourse.

7.2. The Universal Quantifier¶

What makes first-order logic powerful is that it allows us to make general assertions using quantifiers. The universal quantifier \(\forall\) followed by a variable \(x\) is meant to represent the phrase “for every \(x\).” In other words, it asserts that every value of \(x\) has the property that follows it. Using the universal quantifier, the examples with which we began the previous section can be expressed as follows:

\(\forall x \; ((\mathit{even}(x) \vee \mathit{odd}(x)) \wedge \neg (\mathit{even}(x) \wedge \mathit{odd}(x)))\)

\(\forall x \; (\mathit{even}(x) \leftrightarrow 2 \mid x)\)

\(\forall x \; (\mathit{even}(x) \to \mathit{even}(x^2))\)

\(\forall x \; (\mathit{even}(x) \leftrightarrow \mathit{odd}(x+1))\)

\(\forall x \; (\mathit{prime}(x) \wedge x > 2 \to \mathit{odd}(x))\)

\(\forall x \; \forall y \; \forall z \; (x \mid y \wedge y \mid z \to x \mid z)\)

It is common to combine multiple quantifiers of the same kind, and write, for example, \(\forall x, y, z \; (x \mid y \wedge y \mid z \to x \mid z)\) in the last expression.

Here are some notes on syntax:

In symbolic logic, the universal quantifier is usually taken to bind tightly. For example, \(\forall x \; P \vee Q\) is interpreted as \((\forall x \; P) \vee Q\), and we would write \(\forall x \; (P \vee Q)\) to extend the scope.

Be careful, however. In other contexts, especially in computer science, people often give quantifiers the widest scope possible. This is the case with Lean. For example,

∀ x, P ∨ Qis interpreted as∀ x, (P ∨ Q), and we would write(∀ x, P) ∨ Qto limit the scope.When you put the quantifier \(\forall x\) in front a formula that involves the variable \(x\), all the occurrences of that variable are bound by the quantifier. For example, the expression \(\forall x \; (\mathit{even}(x) \vee \mathit{odd}(x))\) is expresses that every number is even or odd. Notice that the variable \(x\) does not appear anywhere in the informal statement. The statement is not about \(x\) at all; rather \(x\) is a dummy variable, a placeholder that stands for the “thing” referred to within a phrase that beings with the words “every thing.” We think of the expression \(\forall x \; (\mathit{even}(x) \vee \mathit{odd}(x))\) as being the same as the expression \(\forall y \; (\mathit{even}(y) \vee \mathit{odd}(y))\). Lean also treats these expressions as the same.

In Lean, the expression

∀ x y z, x ∣ y → y ∣ z → x ∣ zis interpreted as∀ x y z, x ∣ y → (y ∣ z → x ∣ z), with parentheses associated to the right. The part of the expression after the universal quantifier can therefore be interpreted as saying “given thatxdividesyand thatydividesz,xdividesz.” The expression is logically equivalent to∀ x y z, x ∣ y ∧ y ∣ z → x ∣ z, but we will see that, in Lean, it is often convenient to express facts like this as an iterated implication.

A variable that is not bound is called free. Notice that formulas in first-order logic say things about their free variables. For example, in the interpretation we have in mind, the formula \(\forall y \; (x \le y)\) says that \(x\) is less than or equal to every natural number. The formula \(\forall z \; (x \le z)\) says exactly the same thing; we can always rename a bound variable, as long as we pick a name that does not clash with another name that is already in use. On the other hand, the formula \(\forall y (w \le y)\) says that \(w\) is less than or equal to every natural number. This is an entirely different statement: it says something about \(w\), rather than \(x\). So renaming a free variable changes the meaning of a formula.

Notice also that some formulas, like \(\forall x, y \; (x \le y \vee y \le x)\), have no free variables at all. Such a formula is called a sentence, because it makes an outright assertion, a statement that is either true or false about the intended interpretation. In Chapter 10 we will make the notion of an “intended interpretation” precise, and explain what it means to be “true in an interpretation.” For now, the idea that formulas say things about an object in an intended interpretation should motivate the rules for reasoning with such expressions.

In Chapter 1 we proved that the square root of two is irrational. One way to construe the statement is as follows:

For every pair of integers, \(a\) and \(b\), if \(b \ne 0\), it is not the case that \(a^2 = 2 b^2\).

The advantage of this formulation is that we can restrict our attention to the integers, without having to consider the larger domain of rationals. In symbolic logic, assuming our intended domain of discourse is the integers, we would express this theorem using the universal quantifier:

Notice that we have kept the conventional mathematical notation \(b \ne 0\) to say that \(b\) is not equal to 0, but we can think of this as an abbreviation for \(\neg (b = 0)\). How do we prove such a theorem? Informally, we would use such a pattern:

Let \(a\) and \(b\) be arbitrary integers, suppose \(b \ne 0\), and suppose \(a^2 = 2 b^2\).

…

Contradiction.

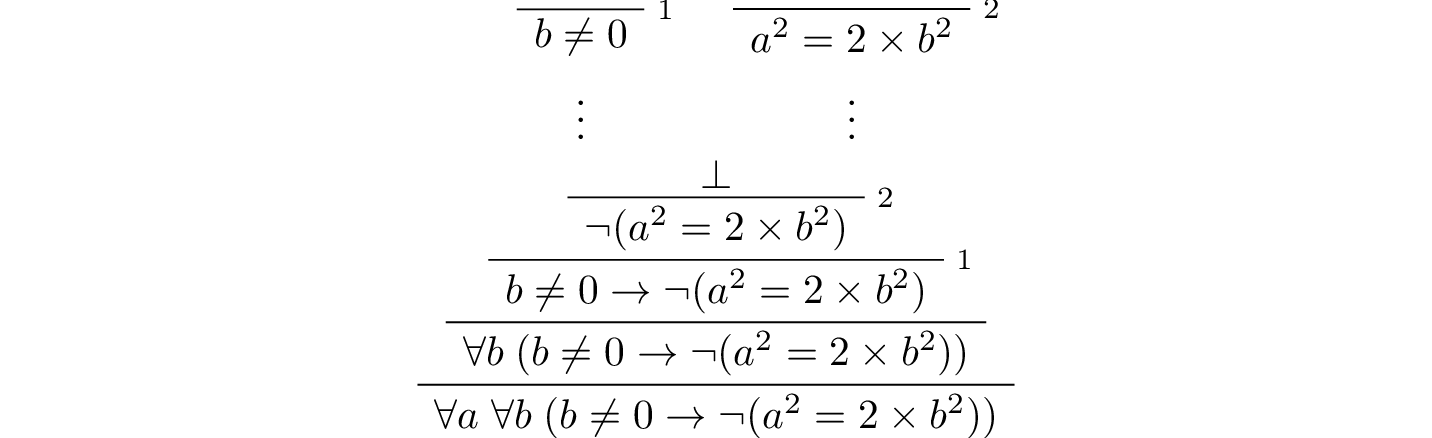

What we are really doing is proving that the universal statement holds, by showing that it holds of “arbitrary” values \(a\) and \(b\). In natural deduction, the proof would look something like this:

Notice that after the hypotheses are canceled, we have proved \(b \ne 0 \to \neg (a^2 = 2 \times b^2)\) without making any assumptions about \(a\) and \(b\); at this stage in the proof, they are “arbitrary,” justifying the application of the universal quantifiers in the next two rules.

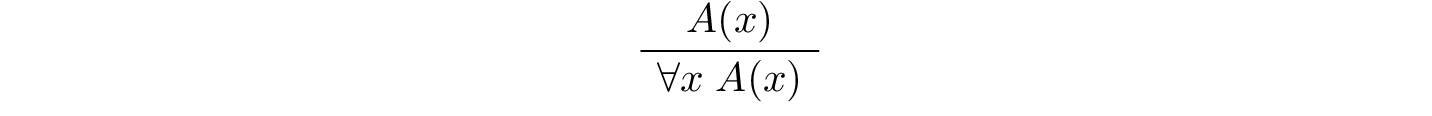

This example motivates the following rule in natural deduction:

provided \(x\) is not free in any uncanceled hypothesis. Here \(A(x)\) stands for any formula that (potentially) mentions \(x\). Also remember that if \(y\) is any “fresh” variable that does not occur in \(A\), we are thinking of \(\forall x \; A(x)\) as being the same as \(\forall y \; A(y)\).

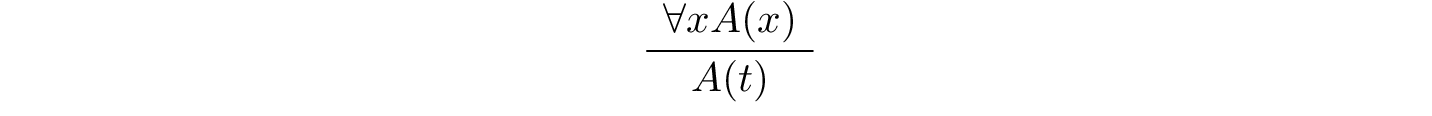

What about the elimination rule? Suppose we know that every number is even or odd. Then, in an ordinary proof, we are free to assert “\(a\) is even or \(a\) is odd,” or “\(a^2\) is even or \(a^2\) is odd.” In terms of symbolic logic, this amounts to the following inference: from \(\forall x \; (\mathit{even}(x) \vee \mathit{odd}(x))\), we can conclude \(\mathit{even}(t) \vee \mathit{odd}(t)\) for any term \(t\). This motivates the elimination rule for the universal quantifier:

where \(t\) is an arbitrary term, subject to the restriction described at the end of the next section.

In a sense, this feels like the elimination rule for implication; we might read the hypothesis as saying “if \(x\) is any thing, then \(x\) is even or odd.” The conclusion is obtained by applying it to the fact that \(n\) is a thing. Note that, in general, we could replace \(n\) by any term in the language, like \(n (m + 5) +2\). Similarly, the introduction rule feels like the introduction rule for implication. If we want to show that everything has a certain property, we temporarily let \(x\) denote an arbitrary thing, and then show that it has the relevant property.

7.3. The Existential Quantifier¶

Dual to the universal quantifier is the existential quantifier, \(\exists\), which is used to express assertions such as “some number is even,” or, “between any two even numbers there is an odd number.”

The following statements about the natural numbers assert the existence of some natural number:

There exists an odd composite number. (Remember that a natural number is composite if it is greater than 1 and not prime.)

Every natural number greater than one has a prime divisor.

For every \(n\), if \(n\) has a prime divisor smaller than \(n\), then \(n\) is composite.

These statements can be expressed in first-order logic using the existential quantifier as follows:

\(\exists n\; (\mathit{odd}(n) \wedge \mathit{composite}(n))\)

\(\forall n \; (n > 1 \to \exists p \; (\mathit{prime}(p) \wedge p \mid n))\)

\(\forall n \; ((\exists p \; (p \mid n \wedge \mathit{prime}(p) \wedge p < n)) \to \mathit{composite}(n))\)

After we write \(\exists n\), the variable \(n\) is bound in the formula, just as for the universal quantifier. So the formulas \(\exists n \; \mathit{composite}(n)\) and \(\exists m \; \mathit{composite}(m)\) are considered the same.

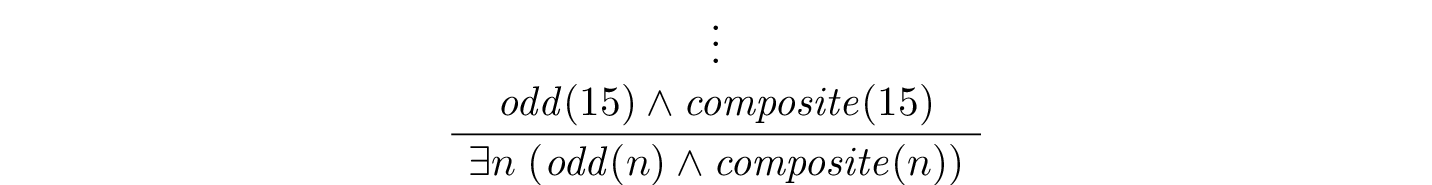

How do we prove such existential statements? Suppose we want to prove that there exists an odd composite number. To do this, we just present a candidate, and show that the candidate satisfies the required properties. For example, we could choose 15, and then show that 15 is odd and that 15 is composite. Of course, there’s nothing special about 15, and we could have proven it also using a different number, like 9 or 35. The choice of candidate does not matter, as long as it has the required property.

In a natural deduction proof this would look like this:

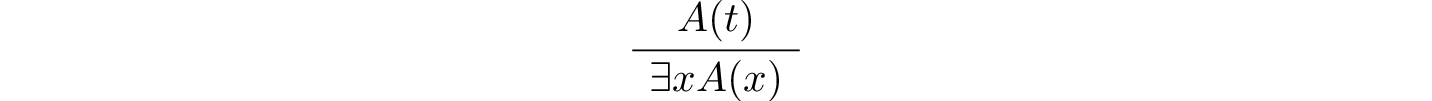

This illustrates the introduction rule for the existential quantifier:

where \(t\) is any term, subject to the restriction described below. So to prove an existential formula, we just have to give one particular term for which we can prove that formula. Such term is called a witness for the formula.

What about the elimination rule? Suppose that we know that \(n\) is some natural number and we know that there exists a prime \(p\) such that \(p < n\) and \(p \mid n\). How can we use this to prove that \(n\) is composite? We can reason as follows:

Let \(p\) be any prime such that \(p < n\) and \(p \mid n\).

…

Therefore, \(n\) is composite.

First, we assume that there is some \(p\) which satisfies the properties \(p\) is prime, \(p < n\) and \(p \mid n\), and then we reason about that \(p\). As with case-based reasoning using “or,” the assumption is only temporary: if we can show that \(n\) is composite from that assumption, that we have essentially shown that \(n\) is composite assuming the existence of such a \(p\). Notice that in this pattern of reasoning, \(p\) should be “arbitrary.” In other words, we should not have assumed anything about \(p\) beforehand, we should not make any additional assumptions about \(p\) along the way, and the conclusion should not mention \(p\). Only then does it makes sense to say that the conclusion follows from the “mere” existence of a \(p\) with the assumed properties.

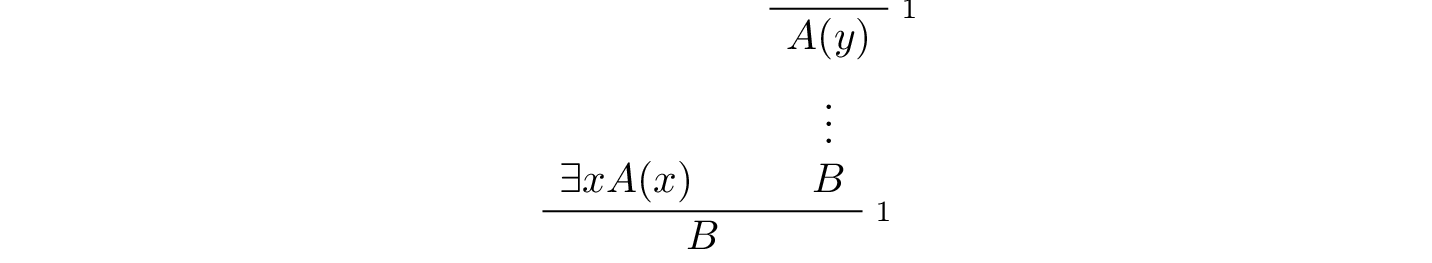

In natural deduction, the elimination rule is expressed as follows:

Here we require that \(y\) is not free in \(B\), and that the only uncanceled hypotheses where \(y\) occurs freely are the hypotheses \(A(y)\) that are canceled when you apply this rule. Formally, this is what it means to say that \(y\) is “arbitrary.” As was the case for or elimination and implication introduction, you can use the hypothesis \(A(y)\) multiple times in the proof of \(B\), and cancel all of them at once. Intuitively, the rule says that you can prove \(B\) from the assumption \(\exists x A(x)\) by assuming \(A(y)\) for a fresh variable \(y\), and concluding, in any number of steps, that \(B\) follows. You should compare this rule to the rule for or elimination, which is somewhat analogous.

There is a restriction on the term \(t\) that appears in the elimination rule for the universal quantifier and the introduction rule for the existential quantifier, namely, that no variable that appears in \(t\) becomes bound when you plug it in for \(x\). To see what can go wrong if you violate this restriction, consider the sentence \(\forall x \; \exists y \; y > x\). If we interpret this as a statement about the natural numbers, it says that for every number \(x\), there is a bigger number \(y\). This is a true statement, and so it should hold whatever we substitute for \(x\). But what happens if we substitute \(y + 1\)? We get the statement \(\exists y \; y > y + 1\), which is false. The problem is that before the substitution the variable \(y\) in \(y + 1\) refers to an arbitrary number, but after the substitution, it refers to the number that is asserted to exist by the existential quantifier, and that is not what we want.

Violating the restriction in the introduction rule for the existential quantifier causes similar problems. For example, it allows us to derive \(\exists x \; \forall y \; y = x\), which says that there is exactly one number, from the hypothesis \(\forall y \; y = y\). The good news is that if you rely on your intuition, you are unlikely to make mistakes like these. But it is an important fact that the rules of natural deduction can be given a precise specification that rules out these invalid inferences.

7.4. Relativization and Sorts¶

In first-order logic as we have presented it, there is one intended “universe” of objects of discourse, and the universal and existential quantifiers range over that universe. For example, we could design a language to talk about people living in a certain town, with a relation \(\mathit{loves}(x, y)\) to express that \(x\) loves \(y\). In such a language, we might express the statement that “everyone loves someone” by writing \(\forall x \; \exists y \; \mathit{loves}(x, y)\).

You should keep in mind that, at this stage, \(\mathit{loves}\) is just a symbol. We have designed the language with a certain interpretation in mind, but one could also interpret the language as making statements about the natural numbers, where \(\mathit{loves}(x, y)\) means that \(x\) is less than or equal to \(y\). In that interpretation, the sentence

is true, though in the original interpretation it makes an implausible claim about the nature of love triangles. In Chapter 10, we will spell out the notion that the deductive rules of first-order logic enable us to determine the statements that are true in all interpretations, just as the rules of propositional logic enable us to determine the statements that are true under all truth assignments.

Returning to the original example, suppose we want to represent the statement that, in our town, all the women are strong and all the men are good looking. We could do that with the following two sentences:

\(\forall x \; (\mathit{woman}(x) \to \mathit{strong}(x))\)

\(\forall x \; (\mathit{man}(x) \to \mathit{good{\mathord{\mbox{-}}}looking}(x))\)

These are instances of relativization. The universal quantifier ranges over all the people in the town, but this device gives us a way of using implication to restrict the scope of our statements to men and women, respectively. The trick also comes into play when we render “every prime number greater than two is odd”:

We could also read this more literally as saying “for every number \(x\), if \(x\) is prime and \(x\) is greater than to 2, then \(x\) is odd,” but it is natural to read it as a restricted quantifier.

It is also possible to relativize the existential quantifier to say things like “some woman is strong” and “some man is good-looking.” These are expressed as follows:

\(\exists x \; (\mathit{woman}(x) \wedge \mathit{strong}(x))\)

\(\exists x \; (\mathit{man}(x) \wedge \mathit{good\mathord{\mbox{-}}looking}(x))\)

Notice that although we used implication to relativize the universal quantifier, here we need to use conjunction instead of implication. The expression \(\exists x \; (\mathit{woman}(x) \to \mathit{strong}(x))\) says that there is something with the property that if it is a woman, then it is strong. Classically this is equivalent to saying that there is something which is either not a woman or is strong, which is a funny thing to say.

Now, suppose we are studying geometry, and we want to express the fact that given any two distinct points \(p\) and \(q\) and any two lines \(L\) and \(M\), if \(L\) and \(M\) both pass through \(p\) and \(q\), then they have to be the same. (In other words, there is at most one line between two distinct points.) One option is to design a first-order logic where the intended universe is big enough to include both points and lines, and use relativization:

But dealing with such predicates is tedious, and there is a mild extension of first-order logic, called many-sorted first-order logic, which builds in some of the bookkeeping. In many-sorted logic, one can have different sorts of objects—such as points and lines—and a separate stock of variables and quantifiers ranging over each. Moreover, the specification of function symbols and predicate symbols indicates what sorts of arguments they expect, and, in the case of function symbols, what sort of argument they return. For example, we might choose to have a sort with variables \(p, q, r, \ldots\) ranging over points, a sort with variables \(L, M, N, \ldots\) ranging over lines, and a relation \(\mathit{on}(p, L)\) relating the two. Then the assertion above is rendered more simply as follows:

7.5. Equality¶

In symbolic logic, we use the expression \(s = t\) to express the fact that \(s\) and \(t\) are “equal” or “identical.” The equality symbol is meant to model what we mean when we say, for example, “Alice’s brother is the victim,” or “2 + 2 = 4.” We are asserting that two different descriptions refer to the same object. Because the notion of identity can be applied to virtually any domain of objects, it is viewed as falling under the province of logic.

Talk of “equality” or “identity” raises messy philosophical questions, however. Am I the same person I was three days ago? Are the two copies of Huckleberry Finn sitting on my shelf the same book, or two different books? Using symbolic logic to model identity presupposes that we have in mind a certain way of carving up and interpreting the world. We assume that our terms refer to distinct entities, and writing \(s = t\) asserts that the two expressions refer to the same thing. Axiomatically, we assume that equality satisfies the following three properties:

reflexivity: \(t = t\), for any term \(t\)

symmetry: if \(s = t\), then \(t = s\)

transitivity: if \(r = s\) and \(s = t\), then \(r = t\)

These properties are not enough to characterize equality, however. If two expressions denote the same thing, then we should be able to substitute one for any other in any expression. It is convenient to adopt the following convention: if \(r\) is any term, we may write \(r(x)\) to indicate that the variable \(x\) may occur in \(r\). Then, if \(s\) is another term, we can thereafter write \(r(s)\) to denote the result of replacing \(s\) for \(x\) in \(r\). The substitution rule for terms thus reads as follows: if \(s = t\), then \(r(s) = r(t)\).

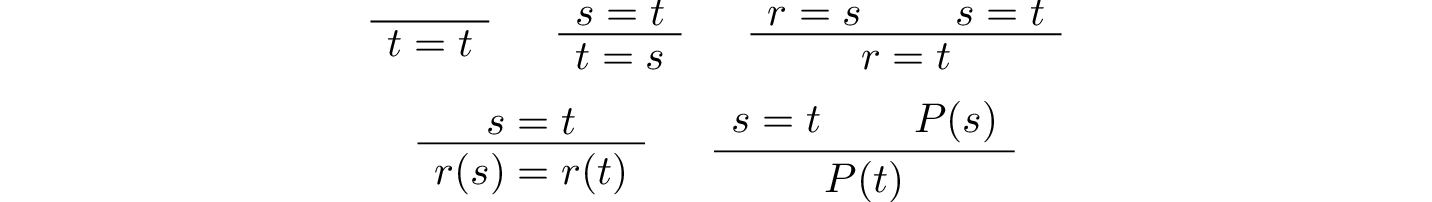

We already adopted a similar convention for formulas: if we introduce a formula as \(A(x)\), then \(A(t)\) denotes the result of substituting \(t\) for \(x\) in \(A\). With this in mind, we can write the rules for equality as follows:

Here, the first substitution rule governs terms and the second substitution rule governs formulas. In the next chapter, you will learn how to use them.

Using equality, we can define even more quantifiers.

We can express “there are at least two elements \(x\) such that \(A(x)\) holds” as \(\exists x \; \exists y \; (x \neq y \wedge A(x) \wedge A(y))\).

We can express “there are at most two elements \(x\) such that \(A(x)\) holds” as \(\forall x \; \forall y \; \forall z \; (A(x) \wedge A(y) \wedge A(z) \to x = y \vee y = z \vee x = z)\). This states that if we have three elements \(a\) for which \(A(a)\) holds, then two of them must be equal.

We can express “there are exactly two elements \(x\) such that \(A(x)\) holds” as the conjunction of the above two statements.

As an exercise, write out in first order logic the statements that there are at least, at most, and exactly three elements \(x\) such that \(A(x)\) holds.

In logic, the expression \(\exists!x \; A(x)\) is used to express the fact that there is a unique \(x\) satisfying \(A(x)\), which is to say, there is exactly one such \(x\). As above, this can be expressed as follows:

The first conjunct says that there is at least one object satisfying \(A\), and the second conjunct says that there is at most one. The same thing can be expressed more concisely as follows:

You should think about why this second expression works. In the next chapter we will see that, using the rules of natural deduction, we can prove that these two expressions are equivalent.

7.6. Exercises¶

A perfect number is a number that is equal to the sum of its proper divisors, that is, the numbers that divide it, other than itself. For example, 6 is perfect, because \(6 = 1 + 2 + 3\).

Using a language with variables ranging over the natural numbers and suitable functions and predicates, write down first-order sentences asserting the following. Use a predicate \(\mathit{perfect}\) to express that a number is perfect.

28 is perfect.

There are no perfect numbers between 100 and 200.

There are (at least) two perfect numbers between 200 and 10,000. (Express this by saying that there are perfect numbers \(x\) and \(y\) between 200 and 10,000, with the property that \(x \neq y\).)

Every perfect number is even.

For every number, there is a perfect number that is larger than it. (This is one way to express the statement that there are infinitely many perfect numbers.)

Here, the phrase “between \(a\) and \(b\)” is meant to include \(a\) and \(b\).

By the way, we do not know whether the last two statements are true. They are open questions.

Using a language with variables ranging over people, and predicates \(\mathit{trusts}(x,y)\), \(\mathit{politician}(x)\), \(\mathit{crazy(x)}\), \(\mathit{knows}(x, y)\), \(\mathit{related\mathord{\mbox{-}}to}(x, y)\), and \(\mathit{rich}(x)\), write down first-order sentences asserting the following:

Nobody trusts a politician.

Anyone who trusts a politician is crazy.

Everyone knows someone who is related to a politician.

Everyone who is rich is either a politician or knows a politician.

In each case, some interpretation may be involved. Notice that writing down a logical expression is one way of helping to clarify the meaning.